By Dr. Felix Chen · Published May 13, 2026 · Updated May 13, 2026

Quantum entanglement is the part of physics where the math is settled, the experiments are repeated to absurd precision, and the popular framing still gets it sideways. The phrase Einstein left us with, “spooky action at a distance,” has done more to confuse readers than almost any other piece of twentieth-century shorthand. There is no action. Nothing is sent. And yet two photons prepared in a particular joint state, then separated by 1.3 kilometers or 1,200 kilometers or, in one 2022 experiment, by an entire continent, will produce measurement outcomes more strongly correlated than any classical theory of local hidden variables can explain. The 2022 Nobel Prize in Physics, awarded to Alain Aspect, John Clauser, and Anton Zeilinger, recognized this correlation as a closed experimental case [1]. What remains genuinely open is what it tells us about the world. That gap, between settled experiment and unsettled interpretation, is the interesting part.

The Direct Answer: What Entanglement Is, and What It Is Not

Quantum entanglement is a nonlocal correlation between two or more quantum systems whose joint state cannot be written as a product of individual states. Measure both entangled particles and the joint statistics of the outcomes are correlated in ways no local classical model can reproduce, even though the statistics seen on either side alone never change. It is not faster-than-light signalling, not telepathy, and not a hidden wire. It is correlation without a local hidden cause, and Bell’s inequality is the test that separates it from correlation with one.
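
The “cannot be written as a product” condition can be checked numerically for two qubits. The sketch below is illustrative only: the function names are invented for this example, and it assumes pure states written as length-4 amplitude vectors in the basis order |00⟩, |01⟩, |10⟩, |11⟩. It uses the standard fact that a pure bipartite state is a product state exactly when its amplitude matrix has a single nonzero singular value (Schmidt coefficient).

```python
import numpy as np

def schmidt_coefficients(state, dim_a=2, dim_b=2):
    """Singular values of the amplitude matrix of a bipartite pure state."""
    amplitudes = np.asarray(state, dtype=complex).reshape(dim_a, dim_b)
    return np.linalg.svd(amplitudes, compute_uv=False)

def is_product_state(state, tol=1e-12):
    """True when exactly one Schmidt coefficient is nonzero (no entanglement)."""
    coeffs = schmidt_coefficients(state)
    return int(np.sum(coeffs > tol)) == 1

# Basis order: |00>, |01>, |10>, |11>
product = np.kron([1, 0], [1, 1]) / np.sqrt(2)   # |0> tensor (|0> + |1>)/sqrt(2)
singlet = np.array([0, 1, -1, 0]) / np.sqrt(2)   # (|01> - |10>)/sqrt(2)

print(is_product_state(product))  # True: it factorizes
print(is_product_state(singlet))  # False: two equal Schmidt coefficients
```

The singlet has two equal Schmidt coefficients of 1/√2, which is the maximally entangled case for two qubits.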

Einstein’s Objection, 1935

In 1935 Albert Einstein, Boris Podolsky, and Nathan Rosen published a four-page paper in Physical Review arguing that quantum mechanics, as then formulated, could not be a complete description of reality [2]. Their reasoning was sharp. If a measurement on one particle lets you predict with certainty the result of a measurement on a distant partner, and you also accept locality, so that the first measurement cannot have disturbed the second particle, then there must be some local “element of reality” that determines the second particle’s result in advance. Quantum mechanics did not include such elements. Therefore, EPR concluded, the theory was incomplete.

Einstein’s later letters to Max Born sharpened the discomfort into the phrase that stuck: spukhafte Fernwirkung, “spooky action at a distance” [3]. He was not endorsing the spookiness. He was using it as a reductio. Any theory that produced spooky action, in his view, was either incomplete or wrong. For thirty years the question sat in the realm of philosophy. No experiment could distinguish quantum mechanics from a hidden-variable theory that happened to predict the same statistics.

Bell, 1964: Turning Philosophy Into Arithmetic

That changed when John Bell, an Irish physicist at CERN, published a short paper titled “On the Einstein-Podolsky-Rosen Paradox” in Physics Physique Fizika in 1964 [4]. Bell showed that any local hidden-variable theory has to obey a specific inequality on the correlations between measurements made on pairs of particles. Quantum mechanics, for entangled pairs, predicts a violation of that inequality. The disagreement is not philosophical. It is a number, and the number is different.

The cleanest form of Bell’s result, the CHSH inequality formulated by Clauser, Horne, Shimony, and Holt in 1969, bounds a particular sum of correlations S at |S| ≤ 2 for any local realist theory. Quantum mechanics predicts a maximum of 2√2 ≈ 2.828, reached for example by the singlet state |Ψ−⟩ = (|01⟩ − |10⟩)/√2. The whole experimental program of the last half-century has been measuring that S with cleaner and cleaner setups, closing one assumption after another, and watching the number land above 2 every time.
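
The quantum figure can be reproduced in a few lines. The following is a minimal sketch, not a model of any particular experiment: it assumes ideal spin measurements along axes in the x-z plane, the sign convention S = E(a, b) − E(a, b′) + E(a′, b) + E(a′, b′), and one standard choice of angles. The helper names `spin` and `correlation` are invented for this example.

```python
import numpy as np

Z = np.array([[1, 0], [0, -1]], dtype=float)
X = np.array([[0, 1], [1, 0]], dtype=float)

def spin(theta):
    """Spin observable (eigenvalues +1/-1) along angle theta in the x-z plane."""
    return np.cos(theta) * Z + np.sin(theta) * X

def correlation(state, theta_a, theta_b):
    """E(a, b) = <psi| A(a) tensor B(b) |psi> for a two-qubit pure state."""
    op = np.kron(spin(theta_a), spin(theta_b))
    return float(np.real(np.conj(state) @ op @ state))

singlet = np.array([0, 1, -1, 0]) / np.sqrt(2)

# Alice's two settings and Bob's two settings (radians).
a, a2 = 0.0, np.pi / 2
b, b2 = np.pi / 4, 3 * np.pi / 4

S = (correlation(singlet, a, b) - correlation(singlet, a, b2)
     + correlation(singlet, a2, b) + correlation(singlet, a2, b2))

print(abs(S))  # close to 2 * sqrt(2), about 2.828
```

For the singlet each correlation is E(a, b) = −cos(a − b), so with these angles all four terms contribute magnitude 1/√2 with the same sign, and |S| lands on 2√2.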

What the inequality actually rules out

Violating Bell’s inequality does not prove quantum mechanics is correct in every detail. It proves that at least one of three assumptions Einstein took as obvious must be wrong: locality (no influence travels faster than light), realism (particles have definite properties before measurement), or freedom of choice (the experimenter’s settings are not predetermined by the same hidden variables as the particles). Most physicists abandon a version of realism. A small minority abandon freedom of choice and call themselves superdeterminists. Almost nobody seriously abandons locality in the signalling sense, because no experiment has ever sent a usable bit faster than light.
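
The classical side of the bound can be checked by brute force. This sketch assumes the simplest local realist picture: each particle carries a predetermined answer (+1 or −1) for each of the two settings it might meet, and the CHSH combination uses the convention S = E(a, b) − E(a, b′) + E(a′, b) + E(a′, b′). The names are illustrative.

```python
from itertools import product

def chsh(a0, a1, b0, b1):
    """CHSH combination for one deterministic assignment of outcomes.

    a0, a1: Alice's predetermined results for her two settings.
    b0, b1: Bob's predetermined results for his two settings.
    """
    return a0 * b0 - a0 * b1 + a1 * b0 + a1 * b1

# Enumerate all 16 deterministic local strategies.
values = [chsh(*s) for s in product([-1, 1], repeat=4)]
max_local = max(abs(v) for v in values)

print(sorted(set(values)))  # [-2, 2]
print(max_local)            # 2
```

Every deterministic assignment gives exactly ±2, because the expression factors as a0(b0 − b1) + a1(b0 + b1) and one bracket always vanishes. Any local hidden-variable model is a probabilistic mixture of these assignments, so its average cannot exceed 2 in magnitude.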

Aspect, 1982, and the Loopholes

The first experiments to take Bell’s idea seriously were run by Stuart Freedman and John Clauser at Berkeley in 1972 [5]. The decisive result came a decade later from Alain Aspect’s group at the Institut d’Optique in Orsay, France, in three papers published in 1981 and 1982 [6]. Aspect used pairs of photons emitted from calcium atoms and measured their polarization correlations at angles chosen during the photons’ flight, so no signal travelling at or below the speed of light could carry information about one detector’s setting to the other in time. In the strongest of these results, the data violated the CHSH inequality by roughly 40 standard deviations.

Critics noted that Aspect’s setup still had loopholes. The detection efficiency was low enough that one could imagine a hidden-variable model in which only a biased sample of pairs got detected. The setting choices were periodic and might not have been independent of the particles. Closing every loophole at once took another thirty years.

The 2015 loophole closures

In 2015 three independent groups closed the remaining loopholes within months of one another. Ronald Hanson’s group at TU Delft used entangled electron spins in two nitrogen-vacancy diamond defects separated by 1.3 kilometers across the Delft campus. After 220 hours of running and 245 useful trials, the group measured S = 2.42 ± 0.20, with a P-value of 0.039: the probability that a local realist model would have produced a violation at least that strong [7]. Marissa Giustina’s group in Vienna and Lynden Shalm’s group at NIST achieved similar closures with entangled photons and high-efficiency detectors that same year [8]. The 2018 BIG Bell Test recruited about 100,000 human volunteers playing an online game to generate the setting choices, addressing the freedom-of-choice loophole with human-generated randomness [9].
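
To get a feel for the Delft numbers, one can do a naive back-of-envelope reading of S = 2.42 ± 0.20. The sketch below assumes the quoted uncertainty is one Gaussian standard deviation and the trials are independent. That is a simplification made for this example, not the analysis behind the quoted 0.039, which is why the two figures differ.

```python
import math

# Values quoted in the text above.
S_measured = 2.42
sigma_S = 0.20
local_bound = 2.0

# Distance above the local realist bound, in standard deviations.
z = (S_measured - local_bound) / sigma_S

# One-sided Gaussian tail probability (simplifying assumption).
p_gaussian = 0.5 * math.erfc(z / math.sqrt(2))

print(round(z, 2))           # about 2.1 standard deviations
print(round(p_gaussian, 3))  # about 0.018 under the Gaussian assumption
```

The violation is modest in statistical terms, roughly two standard deviations from only 245 trials. The significance of the experiment lies in closing the loopholes simultaneously, not in the size of the margin.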

Why No Information Travels Faster Than Light

This is the point where the popular framing collapses, and it is worth being precise. When Alice measures her half of an entangled pair, she gets a random outcome, 0 or 1 with equal probability. Bob’s measurement on his half also gives a random outcome with equal probability. The correlation only appears when Alice and Bob bring their result sheets together and compare. To do that comparison they need a classical channel: a phone call, an email, a radio signal. That channel is bound by relativity. So no usable information has moved between them faster than light, because each separately sees only noise.

The no-communication theorem makes this precise. The reduced density matrix on Bob’s side does not depend on what Alice measures or whether she measures at all [10]. Anyone who tells you entanglement enables instantaneous communication is either selling something or has not done the calculation. Carlo Rovelli’s Helgoland is good on this point; so is Sean Carroll’s Something Deeply Hidden.
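
The theorem can be verified directly for the singlet. The sketch below assumes an ideal projective measurement on Alice’s qubit along an arbitrary axis in the x-z plane, with the outcome not communicated to Bob (a non-selective measurement). The function names are invented for this example.

```python
import numpy as np

def bob_reduced_state(rho):
    """Partial trace over Alice (first qubit) of a two-qubit density matrix."""
    return np.einsum('abad->bd', rho.reshape(2, 2, 2, 2))

def measure_alice(rho, theta):
    """State after Alice measures along angle theta and Bob is not told the result."""
    plus = np.array([np.cos(theta / 2), np.sin(theta / 2)])
    minus = np.array([-np.sin(theta / 2), np.cos(theta / 2)])
    out = np.zeros_like(rho)
    for v in (plus, minus):
        projector = np.kron(np.outer(v, v), np.eye(2))  # acts on Alice only
        out += projector @ rho @ projector
    return out

singlet = np.array([0, 1, -1, 0]) / np.sqrt(2)
rho = np.outer(singlet, singlet)

print(bob_reduced_state(rho))                      # identity / 2
print(bob_reduced_state(measure_alice(rho, 0.7)))  # still identity / 2
```

Whatever angle Alice picks, and whether or not she measures at all, Bob’s local state stays maximally mixed. Nothing he can measure on his own side reveals her choice.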

What Entanglement Is Actually Good For

Once you accept that entanglement is real and not signalling, the engineering question becomes interesting. Three applications have moved from theory to deployed hardware.

- Quantum key distribution (QKD). The BB84 protocol from Bennett and Brassard, and the entanglement-based E91 protocol from Artur Ekert, exploit the fact that any eavesdropper must measure, and any measurement disturbs the state in a detectable way. China’s Micius satellite, operated by Jian-Wei Pan’s group at USTC, demonstrated entanglement distribution over 1,200 kilometers in 2017 and intercontinental key exchange in 2018 [11].

- Quantum teleportation. Charles Bennett and collaborators proposed in 1993 that an unknown quantum state can be reconstructed at a distant location using a shared entangled pair plus two classical bits [12]. The Zeilinger group experimentally demonstrated photon-state teleportation in 1997, and the technique has since been extended to ground-to-satellite links.

- Quantum computing. Entanglement between qubits is the resource that gives quantum algorithms their scaling advantage over classical ones. The current generation of superconducting processors from IBM and Google maintains entanglement across hundreds of qubits, though noise and decoherence still set the practical ceiling.
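
The teleportation item above can be made concrete with a small state-vector simulation. This is a textbook-style sketch under ideal assumptions (no noise, perfect gates), not a model of any of the experiments mentioned. Qubit 0 holds the unknown state, qubits 1 and 2 are the shared pair, and the function and variable names are invented for this example.

```python
import numpy as np

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
P0 = np.array([[1, 0], [0, 0]], dtype=complex)
P1 = np.array([[0, 0], [0, 1]], dtype=complex)

def kron3(a, b, c):
    """Tensor product of three single-qubit operators (qubit 0 first)."""
    return np.kron(a, np.kron(b, c))

def teleport(psi, rng):
    """Teleport psi from qubit 0 to qubit 2. Returns (classical bits, Bob's state)."""
    bell = np.array([1, 0, 0, 1], dtype=complex) / np.sqrt(2)  # shared pair on qubits 1, 2
    state = np.kron(psi, bell)

    # Alice: CNOT (control qubit 0, target qubit 1), then Hadamard on qubit 0.
    cnot01 = kron3(P0, I2, I2) + kron3(P1, X, I2)
    state = kron3(H, I2, I2) @ cnot01 @ state

    # Alice measures qubits 0 and 1; each of the four outcomes is sampled randomly.
    amps = state.reshape(2, 2, 2)                      # indices: q0, q1, q2
    probs = np.sum(np.abs(amps) ** 2, axis=2).ravel()
    outcome = int(rng.choice(4, p=probs))
    m0, m1 = divmod(outcome, 2)
    bob = amps[m0, m1, :] / np.sqrt(probs[outcome])

    # Bob applies corrections that depend on the two classical bits.
    if m1:
        bob = X @ bob
    if m0:
        bob = Z @ bob
    return (m0, m1), bob

rng = np.random.default_rng(0)
psi = np.array([0.6, 0.8j], dtype=complex)
bits, bob = teleport(psi, rng)
fidelity = abs(np.vdot(psi, bob)) ** 2
print(bits, round(fidelity, 12))  # fidelity is 1.0 for any pair of bits
```

Bob cannot finish the protocol until the two bits arrive over an ordinary channel. Before he applies the corrections, his qubit on its own looks maximally mixed, so teleportation respects the same no-signalling limit as every other use of entanglement.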

What Entanglement Is Not

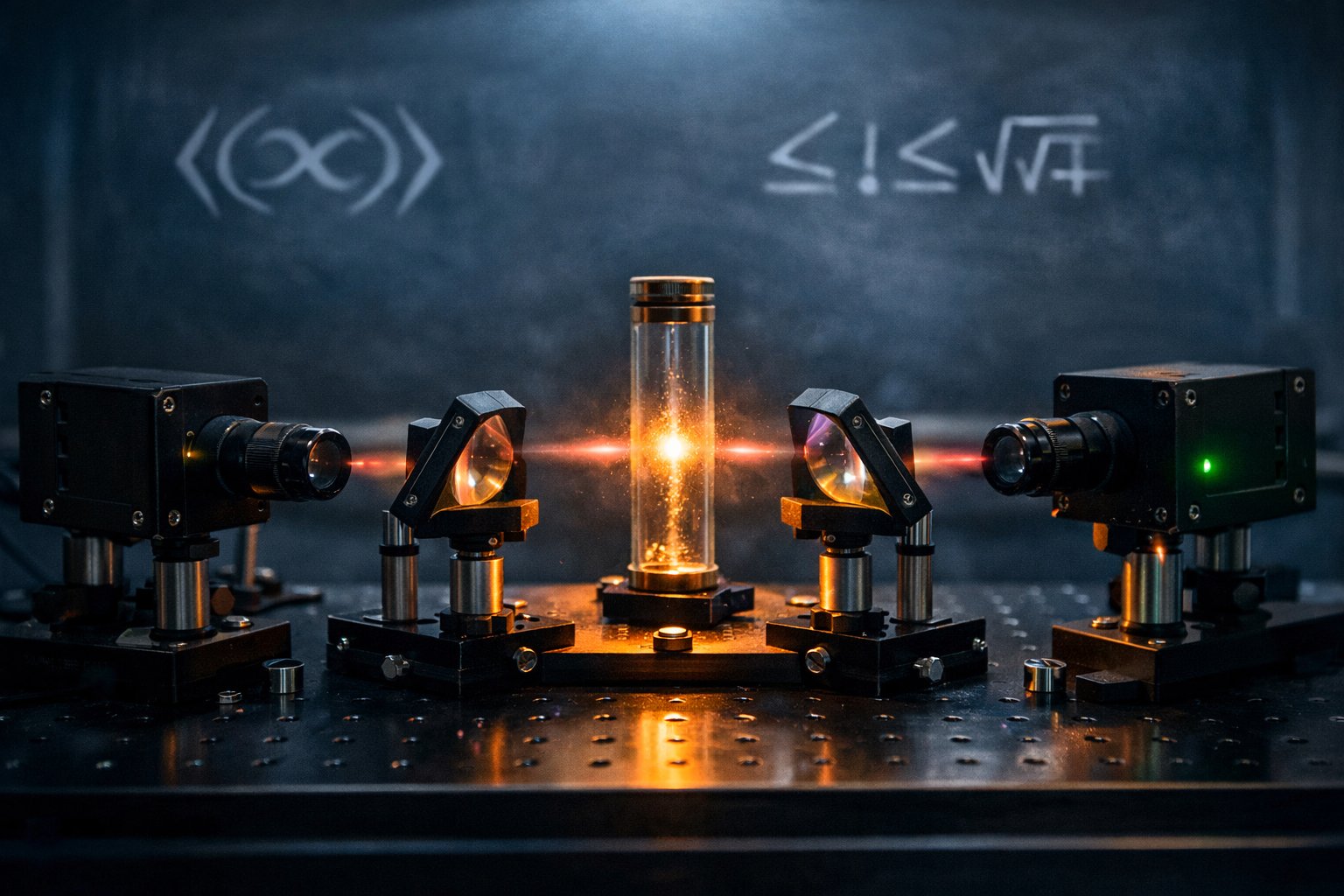

A separate body of writing claims that quantum entanglement explains consciousness, healing, prayer, the feeling of being watched, or the connectedness of all things. None of this follows from the physics. Entanglement is fragile. It decoheres on timescales of microseconds in warm wet environments, which is what brain tissue is. Roger Penrose and Stuart Hameroff have proposed a specific (and contested) microtubule model that at least makes a falsifiable claim; most of the popular “quantum consciousness” literature does not [13]. The serious work happens in laboratories with dilution refrigerators, single-photon detectors, and the discipline to publish null results.

The genuine mystery is sharper and more interesting than the bumper-sticker version. The mathematics of entanglement is unambiguous. The experimental record is closed. What we still do not know is which interpretation of quantum mechanics, if any, captures the underlying ontology. Many-worlds keeps the wave function and abandons collapse. QBism keeps the agent and abandons the universal wave function. Pilot-wave theory keeps realism and accepts genuine nonlocality. Relational quantum mechanics keeps locality and makes properties facts about interactions, not about objects. The experiments do not yet pick a winner.

The Honest Frontier

Three lines of current work are worth watching. The first is device-independent cryptography, where a security proof is built only from observed Bell violations rather than from assumed device behavior. The second is entanglement at scale, including efforts to entangle macroscopic objects (kilogram-scale mirrors in gravitational-wave detectors) to probe whether gravity itself is quantum or classical [14]. The third is the foundational program, including Bertlmann’s reviews of Bell’s theorem and the steady refinement of what exactly the experimental violations imply about reality [15].

Einstein’s “spooky action” was a goad to a generation of experimentalists. The data has now come in. The action is not spooky, because it is not action. But the correlations are real, they are nonlocal in the specific technical sense Bell defined, and they are doing useful work in cryptography, computing, and the foundational arguments still under way. The right register, as always, is wonder kept honest by arithmetic. The arithmetic is in, and the wonder is justified.
